Staff

German Aerospace Center (DLR)

Institute of Robotics and Mechatronics

Perception and Cognition

Oberpfaffenhofen

Münchener Str. 20

82234 Weßling

| Phone: | +49-81 5328 2482 |

| Name: | Dr.-Ing. Klaus H. Strobl |

| URL: | http://www.robotic.dlr.de/Klaus.Strobl/ |

| Room: | Building 135, Room 2219 (how to reach us). |

Résumé

Klaus Strobl has been a research scientist and group leader (sensor algorithms) at the Institute of Robotics and Mechatronics of the German Aerospace Center (DLR) in Oberpfaffenhofen, Germany, since December 2002. His research interests include computer vision, 3-D graphics, camera calibration, mobile robotics, and deep learning. Klaus received his Ph.D. summa cum laude in electrical engineering in 2014 from Technische Universität München and has also studied at the Universidad de Navarra, the Vienna University of Technology, and the Norwegian University of Science and Technology. In 2009, he was a visiting researcher at the Department of Computing, Imperial College London, which led to a best paper finalist award at the 2009 IEEE/RSJ International Conference on Intelligent Robots and Systems (IROS 2009) for his work on efficient motion estimation from images. Klaus is a regular reviewer for the main international conferences and journals in the fields of robotics, computer vision, and aerospace (e.g., IEEE Transactions on Robotics, IEEE Transactions on Pattern Analysis and Machine Intelligence, IEEE Transactions on Automation Science and Engineering, IEEE Robotics and Automation Magazine, IEEE Robotics and Automation Letters, International Journal of Robotics Research, Journal of Field Robotics, Elsevier Robotics and Autonomous Systems, Elsevier Measurement, Elsevier Computers in Industry, Springer Machine Vision and Applications, and ASME Journal of Mechanisms and Robotics), was a program committee member at Robotics: Science and Systems 2012, and serves as an expert for the German Research Foundation (DFG).

Research Interests

- 3-D Modeling by laser triangulation

  - Image processing

  - Error modeling

  - Real-time data fusion under uncertainty

- Calibration of

  - cameras (both pinhole and plenoptic, narrow and wide)

  - hand-eye and head-eye

  - eye-tracking display cameras

  - pico projectors

  - laser stripe profiler

  - laser-range scanner

  - PMD

  - IMU

- Visual pose tracking by

  - visual odometry

  - SLAM

  - bundle adjustment

- Active vision for humanoid walking

- Machine learning

  - Deep learning

  - Hardware:

  - Software:

Activities

- Oct 17th, 2023. Wolfgang Stürzl and I were awarded the Manfred-Fuchs-Innovationspreis 2023 for the long-term development of the camera calibration toolbox DLR CalLab and its licensing to the Liebherr Group (dlr news).

- Nov 29th, 2020. Best paper award at ACCV 2020 for Manuel Stoiber's work on efficiently tracking known 3D objects in 6D. He tuned the tracking cost functions to best suit the Newton optimizer and abide by its assumptions, achieving great efficiency -- with all the perks that this brings along. Check the code repo here. Congratulations, Manuel!

- Dec 3-5, 2019. We're organizing the 5th Machine Learning Workshop (ML-5) at DLR Oberpfaffenhofen, Germany, with 125 attendees.

- May 22, 2019. The METERON SUPVIS Justin contribution was judged the best video of ICRA 2019 -- huge teamwork! Watch it here.

- Mar 2-9, 2019. IEEE Aerospace Conference in Big Sky, MT, USA. We introduced the use of plenoptic cameras for hand-lens imaging in robotic planetary exploration.

- Oct 25, 2018. My ICCV 2011 contribution "More Accurate Pinhole Camera Calibration with Imperfect Planar Target" (paper, poster) has been merged into the OpenCV master branch -- to be released with OpenCV 4.0.0. Thank you Wenfeng for your work. See: Pull request, added function interface.

- Sep 8-14, 2018. ECCV 2018 at home. Welcome to Munich!

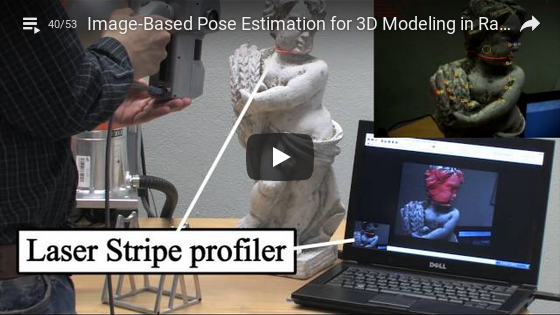

- Mar 15, 2018. Our work "Portable 3-D Modeling using Visual Pose Tracking" has been accepted for publication in the Elsevier Journal Computers in Industry. Our contribution summarizes the state of the art in close-range portable 3-D modeling systems and outlines our own implementation, the DLR 3-D Modeler, which uses passive visual pose tracking on natural features for online data registration.

- Mar 13, 2018. 19th MEON Workshop, Oberpfaffenhofen, Germany. "Portable 3-D Modeling using Visual Pose Tracking." I presented the published method above.

- Oct 23, 2017. We'll attend the final presentation of the activity "Plenoptic Refocusing Methods for 3D Vision-Based Relative Navigation" at ESA's European Space Research and Technology Centre (ESTEC) in Noordwijk, the Netherlands.

- Oct 11-12, 2017. We are presenting our deep learning endeavors for robotic perception at the 1st WissensAustauschWorkshop KI@DLR at Luftwaffenunterstützungsgruppe Wahn (Bundeswehr) in Cologne, Germany.

- Jun 20-22, 2017. 14th Symposium on Advanced Space Technologies in Robotics and Automation (ASTRA 2017) in Leiden, the Netherlands. Our colleagues of the Spaceflight Technology Division at the German Space Operations Center (GSOC) presented our joint work on the calibration of PMD cameras for on-orbit satellite servicing using the camera calibration software DLR CalDe and DLR CalLab.

- Apr 6, 2017. The German Patent and Trade Mark Office (DPMA) granted us the patent DE102015120965.9 on the metric calibration of plenoptic cameras as in the CVIU'16 publication below. The owner of the patent is the DLR; potential licensees please refer to the technology marketing department of the DLR.

- Mar 4-11, 2017. IEEE Aerospace Conference in Big Sky, MT, USA. We'll introduce the use of plenoptic cameras during on-orbit satellite servicing.

- Oct 8-16, 2016. ECCV 2016 in Amsterdam -- looking forward to catching up with old friends!

- May 19, 2016. Best Reviewer Award (aka most dutiful, pro bono blue-collar worker) by the Conference Editorial Board of the IEEE 2016 International Conference on Robotics and Automation (ICRA 2016) in Stockholm, Sweden. Special thanks to the associate editors involved.

- Mar 3-4, 2016. 15th MEON Workshop, Oberpfaffenhofen, Germany. "Stepwise Calibration of Focused Plenoptic Cameras." I'll present the published method below.

- Dec 22nd, 2015. Our work "Stepwise Calibration of Focused Plenoptic Cameras" has been accepted for publication in the Elsevier journal Computer Vision and Image Understanding. Our contribution deals with the metric calibration of plenoptic cameras, which are the kind of monocular, passive depth sensors that we believe can make a big difference in many domains, especially in mobile robotics.

- Dec 8th, 2014 at 3:20pm. Oral presentation titled "Loop Closing for Visual Pose Tracking during Close-Range 3-D Modeling" at the "10th International Symposium on Visual Computing (ISVC 2014)," ballroom 3 at Monte Carlo Resort & Casino, 3655 Las Vegas Blvd S, Las Vegas, NV, USA.

- Jul 4, 2014 at 10am. PhD defense at the main campus of the Technische Universität München, room 1977, building 0509 (invitation letter). President of the board of examiners: Prof. Dr.-Ing. Wolfgang Kellerer. Examiners: Prof. Dr.-Ing. Klaus Diepold, Prof. Dr.-Ing. Gerd Hirzinger, and Prof Andrew J Davison. Final evaluation: Summa cum laude (with highest distinction, unanimously).

- Jul 8, 2013 at 3pm. Introductory talk on visual, simultaneous localization and mapping at the Institute for Data Processing (LDV), Technische Universität München, room number 0938.

- Jun 24, 2013 at 3pm. Introductory talk on calibration of cameras and other sensors at the Institute for Data Processing (LDV), Technische Universität München, room number 0938.

- Program committee member at the 2012 Robotics: Science and Systems Conference (RSS 2012).

- Nov 6-13, 2011. ICCV 2011 in Barcelona, Spain. I'll be presenting a novel, simple method for camera calibration that increases accuracy in the predominant case of using planar calibration patterns.

- Sep 22-23, 2011. 6th MEON Workshop, Oberpfaffenhofen, Germany. "More Accurate Camera Calibration using the Novel Methods in DLR CalLab Version 1.0." We are presenting the novel methods and features of DLR CalLab v. 1.0 (coming soon here).

- May 9-13, 2011. ICRA 2011 in Shanghai, China. We presented improved tracking for the self-referenced DLR 3D-Modeler.

- Nov 9-11, 2010. VISION 2010 trade fair in Stuttgart, Germany. VISION is reportedly the world's most important trade fair for machine vision. The self-referenced DLR 3D-Modeler is to be featured at Hall 6-B 56.

- Oct 24, 2010. Tag der offenen Tür (open day) at DLR Oberpfaffenhofen, Germany. We are located in the foyer of building 124 (Vorstandsgebäude).

- Jun 8-11, 2010. Automatica fair in Munich, Germany. We shall display many of our research results -- this year it will be massive!

- Apr 23, 2010. Invited talk at Department of Mechanical and Mechatronics Engineering, Universidad de Monterrey, Monterrey, Nuevo León, México.

- Apr 20, 2010. Invited talk at Facultad de Física e Inteligencia Artificial, Universidad Veracruzana, Xalapa, Veracruz, México.

- Apr 19-23, 2010. Hannover Messe in Hanover, Germany. We are presenting the self-referenced DLR 3D-Modeler.

- Apr 14-17, 2010. Plenary talk at the 8th International Symposium of Mechatronics Engineering "Automatización y Tecnología 6," at Instituto Tecnológico y de Estudios Superiores de Monterrey, Monterrey, Nuevo León, México: "Flexible 3-D Modeling as a Key Technology for the Breakthrough of Robotics."

  Scientists strive to maximize the immediate performance improvement in their particular fields of expertise. This maximum efficiency paradigm achieves significant improvements in a short period of time and leads to cutting-edge technologies and highly specialized devices. Ambitious technological goals, however, like those enabling groundbreaking new industries such as service robotics, invariably call for a wide range of technologies, and these often turn out to be mutually restricting. Furthermore, those higher goals may impose fundamental constraints like reduced cost, smaller size, or lower weight. These were often not even considered during the development of the required technologies following the maximum efficiency paradigm.

- Mar 18-19, 2010. 3rd MEON Workshop, Berlin, Germany. "Schnelle, leichte und akkurate Kalibrierung mit DLR CalDe und DLR CalLab" (Fast, easy, and accurate calibration with DLR CalDe and DLR CalLab) and "Bildbasierte Selbstlokalisierung des DLR 3D-Modellierers" (Image-based self-localization of the DLR 3D-Modeler).

- Oct 2009. Invited talk at the Dexterous Robotics Laboratory at NASA, Johnson Space Center, Houston, TX, USA: "Present Mechatronic Developments at the Institute of Robotics and Mechatronics of the German Aerospace Center (DLR)," with Thomas Wimböck.

- Oct 2009. IROS 2009, St. Louis, MO, USA. Best paper finalist award... nice!

- Jun-Oct 2009. Visiting researcher at the Robot Vision Research Group, Department of Computing, Imperial College London, London, UK, with Prof Andrew Davison.

- ...

Publications (Google Scholar profile)

PhD thesis

K. H. Strobl.

A Flexible Approach to Close-Range 3-D Modeling.

At Chair for Data Processing, Technische Universität München. Submitted on Sept 16th, 2013. Approved on June 6th, 2014. Defense on July 4th, 2014. Final evaluation: Summa cum laude.

BibTeX entry - Compressed file (7.4 MB) - @mediaTUM - Supplementary videos: EXOMARS 1, 2, 3, 4, DEOS, Justin, and the self-referenced DLR 3-D Modeler 1, 2.

Articles, conference papers, preprints

M. Denninger, D. Winkelbauer, M. Sundermeyer, W. Boerdijk, M. W. Knauer, K. H. Strobl, M. Humt, and R. Triebel.

BlenderProc2: A Procedural Pipeline for Photorealistic Rendering.

Journal of Open Source Software, 8 (82), p. 4901. doi: 10.21105/joss.04901. ISSN 2475-9066.

Paper - elib.

L. Oliva Maza, F. Steidle, J. Klodmann, K. H. Strobl, and R. Triebel.

An ORB-SLAM3-based Approach for Surgical Navigation in Ureteroscopy.

Computer Methods in Biomechanics and Biomedical Engineering: Imaging and Visualization. Taylor & Francis. doi: 10.1080/21681163.2022.2156392. ISSN 2168-1163.

Paper - elib.

G. Lafruit, L. Van Bogaert, J. Sancho Aragón, M. Panzirsch, G. Hirt, K. H. Strobl, and E. Juárez Martínez.

Tele-Robotics VR with Holographic Vision in Immersive Video.

In: IXR '22: Proceedings of the 1st Workshop on Interactive eXtended Reality, pp. 61-68. Association for Computing Machinery, New York, NY, United States. 1st Workshop on Interactive eXtended Reality (IXR '22), 14 Oct 2022, Lisboa, Portugal. doi: 10.1145/3552483.3556461. ISBN 978-1-4503-9501-4.

Paper - elib.

L. Meyer, K. H. Strobl, and R. Triebel.

The Probabilistic Robot Kinematics Model and Its Application to Sensor Fusion.

In: 2022 IEEE/RSJ International Conference on Intelligent Robots and Systems, IROS 2022. 2022 IEEE/RSJ International Conference on Intelligent Robots and Systems, 23-27 Oct 2022, Kyoto, Japan. doi: 10.1109/IROS47612.2022.9981399. ISBN 978-166547927-1. ISSN 2153-0858.

Paper - elib.

M. Lingenauber, D. Seyfert, Ch. Nissler, and K. H. Strobl.

Plenoptic Cameras as Depth Sensors for the Precise Arm Positioning of Planetary Exploration Rovers.

In: 71. Deutschen Luft- und Raumfahrtkongress 2022 (DLRK 2022). 71. Deutschen Luft- und Raumfahrtkongress 2022 (DLRK 2022), 27-29 Sep 2022, Dresden, Germany.

elib.

M. Stoiber, M. Pfanne, K. H. Strobl, R. Triebel, and A. Albu-Schäffer.

SRT3D: A Sparse Region-Based 3D Object Tracking Approach for the Real World.

International Journal of Computer Vision, 130 (4), pp. 1008-1030. Springer. doi: 10.1007/s11263-022-01579-8. ISSN 0920-5691.

Paper - elib.

I. Ballester, A. Fontan, J. Civera, K. H. Strobl, and R. Triebel.

DOT: Dynamic Object Tracking for Visual SLAM.

In: 2021 IEEE International Conference on Robotics and Automation, ICRA 2021, pp. 11705-11711. IEEE. 2021 IEEE International Conference on Robotics and Automation (ICRA), 30 May - 05 June 2021, Xi'an, China. doi: 10.1109/ICRA48506.2021.9561452. ISBN 978-172819077-8. ISSN 1050-4729.

Paper - Preprint at arXiv.org - elib.

L. Meyer, K. H. Strobl, and R. Triebel.

Robust Vision-Based Pose Correction for a Robotic Manipulator Using Active Markers.

In: 17th International Symposium on Experimental Robotics, ISER 2020, pp. 533-542. Springer International Publishing. Experimental Robotics, 14-18 Nov 2021, Malta. doi: 10.1007/978-3-030-71151-1_47. ISBN 978-3-030-71150-4.

Paper - elib.

M. Stoiber, M. Pfanne, K. H. Strobl, R. Triebel, and A. Albu-Schäffer.

A Sparse Gaussian Approach to Region-Based 6DoF Object Tracking.

Proceedings of the Asian Conference on Computer Vision (ACCV 2020), Kyoto, Japan, Nov 30-Dec 4, 2020, oral contribution, best paper award.

BibTeX entry - Paper - Supplementary - Videos - Code - elib.

I. Azqueta-Gavaldon, F. Fröhlich, K. H. Strobl, and R. Triebel.

Segmentation of Surgical Instruments for Minimally-Invasive Robot-Assisted Procedures Using Generative Deep Neural Networks.

Preprint at arXiv.org. Jun 5th, 2020.

M. Smisek, M. Schuster, L. Meyer, M. Vayugundla, F. Schuler, B.-M. Steinmetz, M. G. Müller, W. Stürzl, M. Bihler, J. Langwald, D. Seth, P. Schmaus, N. Y.-S. Lii, P. Kenny, A. Lund, K. J. Höflinger, K. H. Strobl, T. Bodenmüller, and A. Wedler.

Into the Unknown - Autonomous Navigation of the MMX Rover on the Unknown Surface of Mars' Moon Phobos.

ICRA 2020 Workshop on Opportunities and Challenges in Space Robotics, Jun 1-4, 2020, Paris, France.

Poster - elib.

N. Y. Lii, D. Leidner, P. Schmaus, T. Krueger, A. Schiele, A. S. Bauer, R. Bayer, S. Bertone, D. Burdulis, R. Burger, J. Dietl, A. Dietrich, A. Giuliano, G. Grunwald, T. Gumpert, M. Heumos, V. Hofbauer, P. Knudson, E. Korner, A. Maier, A. Meissner, T. Mueller, U. Müllerschkowski, M. Neves, J. Nolan, K. Pasay, A. Pereira, M. Pfau, B. Pleintinger, P. Reisich, F. Schmidt, K. H. Strobl, L. Suchenwirth, F. Wappler, and A. Albu-Schäffer.

The METERON SUPVIS Justin Orbit-to-Ground Experiment: A Glimpse into the Future of Astronaut-Robot Collaboration.

Proceedings of the IEEE International Conference on Robotics and Automation (ICRA 2019), Montreal, Canada, video contribution, May 2019, best video award.

M. Lingenauber, U. Krutz, F. A. Fröhlich, Ch. Nißler, and K. H. Strobl.

In-Situ Close-Range Imaging with Plenoptic Cameras.

Proceedings of the 2019 IEEE Aerospace Conference, Big Sky, MT, USA, March 2-9 2019, https://doi.org/10.1109/AERO.2019.8741956.

BibTeX entry - Paper - @ieeexplore - elib.

M. Lingenauber, U. Krutz, F. A. Fröhlich, Ch. Nißler, and K. H. Strobl.

Plenoptic Cameras for In-Situ Micro Imaging.

Proceedings of the European Planetary Science Congress 2018, Berlin, Germany, September 16-21 2018.

Paper - Poster - elib.

K. H. Strobl, E. Mair, T. Bodenmüller, S. Kielhöfer, T. Wüsthoff, and M. Suppa.

Portable 3-D Modeling using Visual Pose Tracking.

Computers in Industry, Volume 99, August 2018, pp. 53-68, ISSN 0166-3615, https://doi.org/10.1016/j.compind.2018.03.009.

BibTeX entry - Preprint - Paper - @ScienceDirect - elib.

U. Krutz, M. Lingenauber, K. H. Strobl, F. A. Fröhlich, and M. Buder.

Diffraction Model of a Plenoptic Camera for In-Situ Space Exploration.

Proceedings of the SPIE Photonics Europe 2018 Conference, Volume 10677, Strasbourg, France, April 24-26 2018.

Paper - Poster - elib.

K. Klionovska, H. Benninghoff, and K. H. Strobl.

PMD Camera- and Hand-Eye-Calibration for On-Orbit Servicing Test Scenarios on the Ground.

Proceedings of the 14th Symposium on Advanced Space Technologies in Robotics and Automation (ASTRA 2017), Leiden, The Netherlands, June 20-22 2017.

elib

M. Lingenauber, K. H. Strobl, N. W. Oumer, and S. Kriegel.

Benefits of Plenoptic Cameras for Robot Vision during Close Range On-Orbit Servicing Maneuvers.

Proceedings of the 2017 IEEE Aerospace Conference, Big Sky, MT, USA, March 4-11 2017, pp. 1-18, https://doi.org/10.1109/AERO.2017.7943666.

BibTeX entry - @ieeexplore - elib.

K. H. Strobl and M. Lingenauber.

Stepwise Calibration of Focused Plenoptic Cameras.

Computer Vision and Image Understanding (CVIU), Volume 145, April 2016, pp. 140-147, ISSN 1077-3142, http://dx.doi.org/10.1016/j.cviu.2015.12.010.

BibTeX entry - Preprint - Paper - @ScienceDirect - elib.

Note the typo in Eq. (8): use -(h11·h12+h21·h22) instead of -h11·h12+h21·h22.

K. H. Strobl.

Loop Closing for Visual Pose Tracking during Close-Range 3-D Modeling.

In G. Bebis et al. (Eds.): ISVC 2014, Part I, LNCS 8887, pp. 390-401. Springer International Publishing Switzerland (2014).

BibTeX entry - Paper - Supplementary video - elib.

K. H. Strobl and G. Hirzinger.

More Accurate Pinhole Camera Calibration with Imperfect Planar Target.

Proceedings of the IEEE International Conference on Computer Vision (ICCV 2011), 1st IEEE Workshop on Challenges and Opportunities in Robot Perception, Barcelona, Spain, pp. 1068-1075, November 2011.

BibTeX entry - Paper - Supplementary material - Poster (size A0) - elib - Internal implementation in DLR CalDe and DLR CalLab, externally validated by calibrel (OpenCV-based), and merged into OpenCV (pull request #12772, added function interface).

K. H. Strobl, E. Mair, and G. Hirzinger.

Image-Based Pose Estimation for 3-D Modeling in Rapid, Hand-Held Motion.

Proceedings of the IEEE International Conference on Robotics and Automation (ICRA 2011), Shanghai, China, pp. 2593-2600, May 2011.

BibTeX entry - Paper - Videos - elib.

E. Mair, K. H. Strobl, T. Bodenmüller, M. Suppa, and D. Burschka.

Real-time Image-based Localization for Hand-held 3D-modeling.

KI – Künstliche Intelligenz, vol. 24, no. 3, pp. 207-214, May 2010.

BibTeX entry - elib.

K. H. Strobl, E. Mair, T. Bodenmüller, S. Kielhöfer, W. Sepp, M. Suppa, D. Burschka, and G. Hirzinger.

The Self-Referenced DLR 3D-Modeler.

Proceedings of the IEEE/RSJ International Conference on Intelligent Robots and Systems (IROS 2009), St. Louis, MO, USA, pp. 21-28, October 2009, best paper finalist.

BibTeX entry - Paper - Videos - elib.

K. H. Strobl, W. Sepp, and G. Hirzinger.

On the Issue of Camera Calibration with Narrow Angular Field of View.

Proceedings of the IEEE/RSJ International Conference on Intelligent Robots and Systems (IROS 2009), St. Louis, MO, USA, pp. 309-315, October 2009.

BibTeX entry - Paper - elib.

E. Mair, K. H. Strobl, M. Suppa, and D. Burschka.

Efficient Camera-Based Pose Estimation for Real-Time Applications.

Proceedings of the IEEE/RSJ International Conference on Intelligent Robots and Systems (IROS 2009), St. Louis, MO, USA, pp. 2696-2703, October 2009.

F. Lange, K. H. Strobl, J. Langwald, S. Jörg, G. Hirzinger, B. Gruber, J. Klein, and J. Werner.

Kameragestützte Montage von Rädern an kontinuierlich bewegte Fahrzeuge.

VDI-Berichte 2012 (Robotik 2008), Munich, Germany, pp. 155-158, June 2008, in German.

BibTeX entry - Abstract - Paper.

K. H. Strobl and G. Hirzinger.

More Accurate Camera and Hand-Eye Calibrations with Unknown Grid Pattern Dimensions.

Proceedings of the IEEE International Conference on Robotics and Automation (ICRA 2008), Pasadena, California, USA, pp. 1398-1405, May 2008.

BibTeX entry - Paper.

M. Suppa, S. Kielhoefer, J. Langwald, F. Hacker, K. H. Strobl, and G. Hirzinger.

The 3D-Modeller: A Multi-Purpose Vision Platform.

Proceedings of the IEEE International Conference on Robotics and Automation (ICRA 2007), Rome, Italy, pp. 781-787, April 2007.

K. H. Strobl and G. Hirzinger.

Optimal Hand-Eye Calibration.

Proceedings of the IEEE/RSJ International Conference on Intelligent Robots and Systems (IROS 2006), Beijing, China, pp. 4647-4653, October 2006.

BibTeX entry - Paper.

K. H. Strobl, W. Sepp, E. Wahl, T. Bodenmüller, M. Suppa, J. F. Seara, and G. Hirzinger.

The DLR Multisensory Hand-Guided Device: The Laser Stripe Profiler.

Proceedings of the IEEE International Conference on Robotics and Automation (ICRA 2004), New Orleans, LA, USA, pp. 1927-1932, April 2004.

BibTeX entry - Paper - Videos (MP4): Meshing, calibration.

J. F. Seara, K. H. Strobl, E. Martin, and G. Schmidt.

Task-Oriented and Situation-Dependent Gaze Control for Vision Guided Humanoid Walking.

Proceedings of the 3rd IEEE-RAS International Conference on Humanoid Robots (Humanoids2003), Munich and Karlsruhe, Germany, October 2003.

BibTeX entry - Paper

J. F. Seara, K. H. Strobl, and G. Schmidt.

Path-Dependent Gaze Control for Obstacle Avoidance in Vision Guided Humanoid Walking.

Proceedings of the IEEE International Conference on Robotics and Automation (ICRA 2003), Taipei, Taiwan, pp. 887-892, September 2003.

BibTeX entry - Paper

J. F. Seara, K. H. Strobl, and G. Schmidt.

Information Management for Gaze Control in Vision Guided Biped Walking.

Proceedings of the IEEE/RSJ/GI International Conference on Intelligent Robots and Systems (IROS 2002), Lausanne, Switzerland, pp. 31-36, October 2002.

BibTeX entry - Paper

O. Lorch, J. F. Seara, K. H. Strobl, U. D. Hanebeck, and G. Schmidt.

Perception Errors in Vision Guided Walking: Analysis, Modeling, and Filtering.

Proceedings of the IEEE International Conference on Robotics and Automation (ICRA 2002), Washington DC, USA, pp. 2048-2053, May 2002.

BibTeX entry - Paper

J. F. Seara, O. Lorch, and G. Schmidt.

Gaze Control for Goal-Oriented Humanoid Walking.

Proceedings of the 2nd IEEE-RAS International Conference on Humanoid Robots (Humanoids2001), Waseda, Tokyo, Japan, pp. 187-195, November 2001.

BibTeX entry - Paper

Internal reports

K. H. Strobl.

Parametrizable, Task-Dependent Gaze Control for Vision Guided Autonomous Walking.

Master's Thesis, Lehrstuhl für Steuerungs- und Regelungstechnik, Technische Universität München, Germany, May 2002.

BibTeX entry - Thesis - Extension - Videos (DivX): dead-reckoning, dead-reckoning+measurements, obstacle avoidance, self localization, and a mixture of them.

Internship reports

K. H. Strobl and O. Kristiansen.

MovingCam, Technical Documentation - Threeplex.

THREEPLEX Project, Work Package 5, Task 5.3. Report 32.1023.00/07/03 28p. 2apps. NTNU Multiphase Flow Laboratory and SINTEF Petroleum Research, Trondheim, Norway, 2003.

K. H. Strobl.

A Testing Set for Piezoelectric Ultrasonic Microphones.

Microelectronics and Microsystems Department, CEIT, San Sebastián, Spain, August 2001.

Patents

- DE102010004233B3: [EN] Method for determining position of camera system with respect to display, involves determining geometric dimensions of object and calibration element, and determining fixed spatial relation of calibration element to object. [DE] Verfahren zur Bestimmung der Lage eines Kamerasystems bezüglich eines Objekts.

  The method involves recording an image with an image content by a camera system (2) for an undetermined position of a mirror (4). A parameter of an imaging function is determined from image information of a part of the image content, where the imaging function characterizes imaging of an object point in an image storage of the camera system. Geometric dimensions of an object i.e. display (3), and a calibration element i.e. calibration pattern (6), are determined, and fixed spatial relation of the calibration element to the object is determined.

- DE102015120965.9: [EN] Method and device for determining calibration parameters for the metric calibration of a plenoptic camera. [DE] Verfahren und Vorrichtung zur Ermittlung von Kalibrierparametern zur metrischen Kalibrierung einer plenoptischen Kamera.

  The invention relates to a method and a device for determining calibration parameters for the metric calibration of a plenoptic camera. The method is characterized in that at least one calibration pattern of known geometry is recorded with the plenoptic camera from N different perspectives to generate image data sets BDi,TB and BDi,2D with i = 1, 2, ..., N and N ≥ 1, where BDi,TB are depth images, each representing depth information v(Sx, Sy) at projection points Sx, Sy in the camera, and BDi,2D are total-focus images, each representing brightness information at the projection points Sx, Sy in the camera; that, based exclusively on the image data sets BDi,2D, the detector element size p, and the known geometry of the calibration pattern with the features M, the following calibration parameters KP1 are determined: the focal length f of the main lens L and an optimally focused depth Czf(M) of projections of the geometric features M of the calibration pattern inside the camera; and that, based exclusively on the image data sets BDi,TB and the calibration parameters KP1, the following calibration parameters KP2 are determined: a distance h between a reference plane of the multi-lens array MLA and a reference plane of the main lens L, and a distance b between the reference plane of the multi-lens array MLA and a reference plane of the detector DET.

Student projects

- DLR CalLab - Reprogramming from Matlab into IDL and Extensions (Internship, awarded to Mr. Cristian Paredes 16.05.2005-14.10.2005).

- Evaluation of omnidirectional camera calibration methods, extension to stereo omnidirectional camera calibration, and C++ implementation of 3-D reconstruction methods for omnidirectional cameras (Internship, awarded to Mr. Michal Smisek 20.6.2011-9.9.2011).

- Development and Implementation of New Image Processing Methods for Robust Laser Profiler Operation (Diploma-Thesis, awarded).

- Stereo Light Stripe Profiler with Redundancy Check for Robust 3-D Modeling (Internship, awarded).

- Implementation of an Algorithm for Simultaneous Localization and Mapping using RGB(-D) Data and a Wheeled Mobile Robot's Odometry; Adaptation to Indoor Navigation (MSc Thesis, free).

- 3-D Modeling Using a Monocular Plenoptic Camera (MSc Thesis, free).

- Extension of the Lens Distortion Model for Cameras with Wide Angular Field of View (MSc Thesis or student internship, free).

Personal

- LSR crew.

- Decision Theory.

- "Ray Kurzweil: How technology's accelerating power will transform us," Feb 2005, at TED.com.

- Robert Laws: Experience shapes perception.

- Amazing experiments on augmented reality (AR) by the Russians here.

© DLR - Institute of Robotics and Mechatronics. All rights reserved.