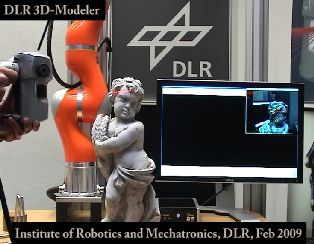

Dr.-Ing. Klaus H. Strobl ‒ iros2009

Complementary videos to the IROS 2009 publication "The Self-Referenced DLR 3D-Modeler."

This is the accompanying video:

strobl_iros09_video.avi (codec: msmpeg4v2, size: 4.9 MB)

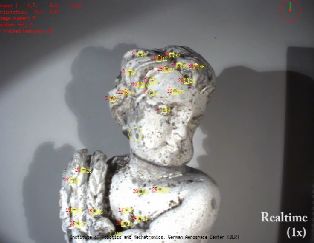

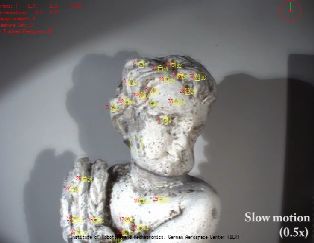

These videos show the challenging sequence detailed in the section "Experiments," both in real-time and in slow motion:

challenging_100.avi (codec: msmpeg4v2, size: 9.2 MB)

challenging_50.avi (codec: msmpeg4v2, size: 19 MB)

In this video we show vision-based ego-motion estimation, laser profiling, and online mesh generation (by Tim Bodenmüller) running simultaneously on the same computer, followed by texturing (by Wolfgang Sepp):

meshing.avi (codec: msmpeg4v2, size: 5.4 MB)

This video corresponds to the accuracy experiments mentioned in the paper. The 3D-Modeler was mounted on a Kuka KR16 robotic manipulator, which, together with the hand-eye calibration, provided the ground truth. No IMU was used for image flow prediction; image flow extrapolation was used instead.

neuschw_acc.avi (codec: msmpeg4v2, size: 12 MB)

The next videos focus on tracking performance as a function of the image flow prediction scheme used. In all of them the algorithm simultaneously estimates the camera pose at 25 Hz. In the first video neither IMU data nor image flow extrapolation is used: the starting location of each feature for tracking is its last tracked position or, if the feature was lost, its expected projection computed from the known structure and the last available pose estimate. Tracking is very unstable and only very slow motion is possible. Optical flow larger than 7-8 pixels is no longer tolerated, a limit that is very easily reached in rotation:

tracking_last.avi (codec: msmpeg4v2, size: 3.0 MB)
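The baseline scheme of this first video can be sketched as follows. This is only an illustration under stated assumptions, not the code used for the video: the pinhole model with focal length `f` and principal point `c`, the pose convention `x_cam = R * X + t`, and the names `project` and `start_location` are all hypothetical.

```python
from typing import Optional, Sequence, Tuple

Vec2 = Tuple[float, float]
Vec3 = Tuple[float, float, float]


def project(point_w: Vec3, R: Sequence[Sequence[float]], t: Vec3,
            f: float, c: Vec2) -> Vec2:
    """Project a known 3D point into the image (assumed pinhole model).

    Assumed pose convention: x_cam = R * point_w + t.
    """
    xc = [sum(R[i][j] * point_w[j] for j in range(3)) + t[i] for i in range(3)]
    return (f * xc[0] / xc[2] + c[0], f * xc[1] / xc[2] + c[1])


def start_location(last_tracked: Optional[Vec2], point_w: Vec3,
                   R: Sequence[Sequence[float]], t: Vec3,
                   f: float, c: Vec2) -> Vec2:
    """Starting location for tracking without any flow prediction.

    Use the last tracked position if the feature is still tracked;
    otherwise fall back to the projection of the known structure
    with the last available pose estimate (R, t).
    """
    if last_tracked is not None:
        return last_tracked
    return project(point_w, R, t, f, c)
```

Because the starting location ignores any motion since the last frame, the tracker has to absorb the full image flow by itself, which is consistent with the small tolerated flow described above.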

In the second video, the last image flow is estimated for every feature and then added to its last tracked position. This is called image flow extrapolation. Performance is good in translation but not in rotation, because the rotational part of the image flow varies more than the translational part:

tracking_optical.avi (codec: msmpeg4v2, size: 5.8 MB)
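Image flow extrapolation as described above amounts to a constant-flow prediction per feature. A minimal sketch, assuming the last image flow is taken as the difference between the two most recent tracked positions (the function name `extrapolate_flow` is hypothetical):

```python
from typing import Tuple

Vec2 = Tuple[float, float]


def extrapolate_flow(prev_pos: Vec2, last_pos: Vec2) -> Vec2:
    """Predict the next feature position by image flow extrapolation.

    The last image flow (last_pos - prev_pos) is assumed to repeat
    and is added to the last tracked position.
    """
    flow = (last_pos[0] - prev_pos[0], last_pos[1] - prev_pos[1])
    return (last_pos[0] + flow[0], last_pos[1] + flow[1])
```

For example, a feature that moved from (100, 50) to (104, 53) is expected at (108, 56) in the next image. The prediction is only as good as the assumption that the flow stays constant between frames, which holds less well for the rotational part of the flow.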

In the last video we show the satisfactory performance, under highly dynamic conditions, of the hybrid prediction scheme presented in the paper. IMU data are used to predict the rotational image flow of every feature. To predict the translational image flow, the last translational image flow is first estimated (from the last image flow given by the tracking results and from the last rotational image flow predicted from the IMU); the current translational image flow prediction is then the extrapolation of that last translational image flow. The resulting hybrid image flow is, again, added to the last tracked positions of the features:

tracking_hybrid.avi (codec: msmpeg4v2, size: 3.5 MB)
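The hybrid scheme can be sketched per feature as follows. This is an interpretation, not the implementation from the paper: it assumes that the last translational flow is obtained by subtracting the last IMU-predicted rotational flow from the last measured flow, and it takes the rotational flows as given inputs rather than computing them from IMU readings. The function and parameter names are hypothetical.

```python
from typing import Tuple

Vec2 = Tuple[float, float]


def add(a: Vec2, b: Vec2) -> Vec2:
    return (a[0] + b[0], a[1] + b[1])


def sub(a: Vec2, b: Vec2) -> Vec2:
    return (a[0] - b[0], a[1] - b[1])


def hybrid_prediction(last_pos: Vec2, last_flow: Vec2,
                      last_rot_flow: Vec2, current_rot_flow: Vec2) -> Vec2:
    """Predict the next feature position with a hybrid image flow.

    last_flow        : last image flow from the tracking results
    last_rot_flow    : rotational flow predicted from the IMU for the last step
    current_rot_flow : rotational flow predicted from the IMU for this step
    """
    # Assumed decomposition: measured flow = rotational + translational part.
    last_trans_flow = sub(last_flow, last_rot_flow)
    # Translational part is extrapolated; rotational part comes from the IMU.
    hybrid_flow = add(current_rot_flow, last_trans_flow)
    return add(last_pos, hybrid_flow)
```

With a last position of (200, 100), a last flow of (10, 2), a last rotational flow of (7, 1), and a current rotational flow of (9, -1), the extrapolated translational flow is (3, 1) and the predicted position is (212, 100). Only the slowly varying translational part is extrapolated, while the rapidly varying rotational part is predicted from the IMU.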

by Klaus Strobl, on February 25th, 2009.